In the current landscape of digital media production, the most significant bottleneck for creators is often not the visual component but the auditory one. Sourcing high-quality, royalty-free background tracks that match the emotional pacing of a video can consume hours of editing time. This friction has led to a surge in the adoption of algorithmic composition tools, where a specialized AI Music Generator serves as an on-demand composer. By shifting from searching static libraries to generating assets on demand, content producers can maintain a consistent creative flow and meet their audio requirements with a precision and speed that were previously hard to achieve without a dedicated studio team.

Constructing Narrative Depth Through Lyric To Song Synthesis

The true power of this technology lies in its ability to interpret the semantic weight of written language. Unlike early iterations of audio synthesis that merely looped pre-recorded samples, the current generation of engines builds each composition from the ground up based on textual prompts. This is particularly evident in the “Lyric to Song” functionality, where the system analyzes the rhythmic structure of user-provided verses and generates a vocal melody that aligns with the chosen genre. In my analysis of the output, the V4 model demonstrated a sophisticated grasp of musical phrasing, stretching syllables and adding melismatic runs that mimic an emotive human performance.

Defining Sonic Identity With Precise Style And Mood Control

Creators often struggle to find music that sits in the specific emotional pocket required for a scene: music that is energetic but not distracting, or melancholic without being depressive. The platform addresses this through a granular control system. Users are not limited to broad categories; they can combine descriptors to create niche hybrid genres. For instance, requesting a “cyberpunk jazz fusion with rain sounds” yields a result shaped by each of those descriptors. This level of customization allows for the creation of a unique sonic identity, helping a brand or channel sound distinct from competitors who rely on the same overused stock audio files found on generic marketplaces.

Navigating The Technical Nuances Of Instrumental Generation

For video editors, vocals can sometimes clash with voice-over narration. The “Instrumental Mode” is a critical feature that bypasses the vocal synthesis layer entirely, focusing the computational power on richness of arrangement and mix quality. During my testing, I observed that this mode often results in higher fidelity backing tracks, as the frequency spectrum is not crowded by a synthesized voice. This is ideal for podcasts, documentary background beds, and corporate presentations where clarity and unobtrusiveness are paramount. The ability to switch between vocal-heavy pop tracks and clean instrumental ambient pieces within the same interface streamlines the asset acquisition process significantly.

Optimizing Output Quality Through Iterative Prompt Engineering Techniques

The quality of the generated audio depends heavily on the quality of the input. The system relies on “prompts”: descriptions that guide the neural network. A vague prompt like “rock music” will tend to yield generic results, whereas specific terminology regarding instrumentation, tempo (BPM), and production style (e.g., “lo-fi,” “reverb-heavy,” “acoustic”) significantly improves the outcome. Users should view the generation process as iterative; it is common to refine the text prompt two or three times to dial in the exact sound required. This “human-in-the-loop” approach helps the final export align with the creator’s artistic vision rather than being a random artifact of the algorithm.
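The refinement from a vague prompt to a specific one can be sketched in a few lines of Python. This is only an illustration of the idea, not the platform's interface: the article describes prompts as free-text descriptions, so the helper name `build_prompt`, its parameters, and the example instruments are all hypothetical.

```python
from typing import Optional, Sequence


def build_prompt(
    genre: str,
    instruments: Sequence[str] = (),
    bpm: Optional[int] = None,
    production: Sequence[str] = (),
) -> str:
    """Assemble a descriptive text prompt from musical attributes.

    Hypothetical helper: the parameter names are illustrative and are not
    part of any documented interface.
    """
    parts = [genre.strip()]
    if instruments:
        parts.append("featuring " + ", ".join(instruments))
    if bpm is not None:
        if bpm <= 0:
            raise ValueError("bpm must be a positive integer")
        parts.append(f"{bpm} BPM")
    if production:
        parts.append(", ".join(production) + " production")
    return ", ".join(parts)


# First attempt: vague, so the result is likely to sound generic.
vague = build_prompt("rock music")

# Refined attempt: instrumentation, tempo, and production style are explicit.
refined = build_prompt(
    "rock music",
    instruments=["acoustic guitar", "brushed drums"],
    bpm=92,
    production=["lo-fi", "reverb-heavy"],
)

print(vague)
print(refined)
```

Keeping the attributes separate makes each iteration a one-field change (for example, adjusting only the tempo), which fits the two-or-three-pass refinement described above.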

Implementing A Strategic Workflow For Instant Audio Generation

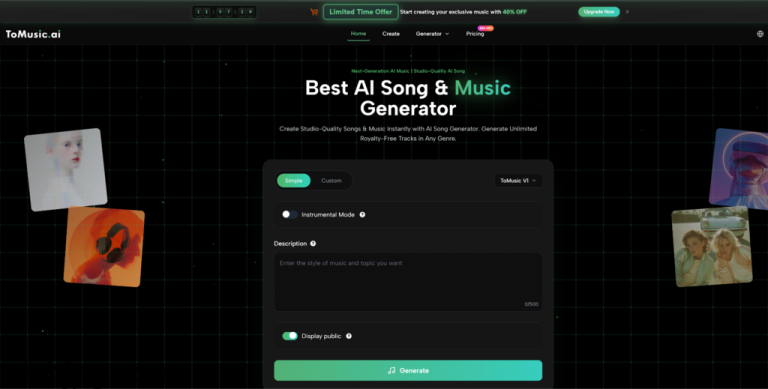

To maximize the utility of this tool, it is essential to follow a structured approach that mirrors professional production standards. The platform is designed to move the user from concept to file download efficiently, minimizing the time spent in menus. Based on the operational logic of the site, the most effective workflow involves a clear sequence of configuration and review.

Step One: Establishing The Lyrical And Stylistic Foundation

The process begins in the creation interface, where the user must choose between “Custom” and “Simple” modes. For professional results, the Custom mode is superior. Here, the user inputs the lyrics (either written personally or generated for specific themes like “Story” or “Mood”) and defines the musical style. This is the stage where the metadata for the track is established, including the title and the intended genre tags that guide the AI’s structural decisions.

Step Two: Configuring Model Parameters And Generation

Once the creative direction is set, the user selects the appropriate model version. The V4 model is generally recommended for its superior audio fidelity and longer duration capabilities, supporting tracks up to several minutes in length. At this stage, users can also toggle the “Instrumental” switch if vocals are unnecessary. Clicking the generate button sends the data to the server, which typically processes the request in under a minute and returns two distinct variations of the track for the user to choose from.

Step Three: Finalizing The Asset With Post Production Tools

Upon listening to the generated variations, the user selects the preferred iteration. For advanced users, the platform offers post-processing options such as “Extract Stems,” which separates the vocals, drums, and melody into individual files. This is crucial for remixing or fine-tuning the mix in external Digital Audio Workstations (DAWs). Finally, the track is downloaded in a high-quality format (WAV is preferred for editing) and is ready for integration into the video or audio project.
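The choices made across the three steps can be collected in one place before generating. The sketch below is a hypothetical checklist, not the platform's API: the class `TrackRequest`, its field names, and the rule that Custom mode with vocals needs lyrics are assumptions made for illustration, and the example title is invented.

```python
from dataclasses import dataclass
from typing import List

# Option names taken from the article; the lowercase spellings are assumed.
VALID_MODES = {"custom", "simple"}
VALID_FORMATS = {"wav", "mp3"}


@dataclass
class TrackRequest:
    """Hypothetical record of the settings chosen in steps one to three."""

    title: str
    style_tags: List[str]
    lyrics: str = ""
    mode: str = "custom"         # step one: Custom or Simple
    model: str = "V4"            # step two: model version
    instrumental: bool = False   # step two: skip the vocal layer
    export_format: str = "wav"   # step three: WAV preferred for editing

    def validate(self) -> List[str]:
        """Return a list of problems; an empty list means ready to generate."""
        problems = []
        if self.mode not in VALID_MODES:
            problems.append(f"unknown mode: {self.mode}")
        if self.export_format not in VALID_FORMATS:
            problems.append(f"unknown export format: {self.export_format}")
        if not self.style_tags:
            problems.append("at least one style tag is needed")
        # Assumption: Custom mode with vocals expects user-supplied lyrics.
        if self.mode == "custom" and not self.instrumental and not self.lyrics.strip():
            problems.append("lyrics are needed unless instrumental is enabled")
        return problems


request = TrackRequest(
    title="Rainy Night Drive",
    style_tags=["cyberpunk", "jazz fusion"],
    instrumental=True,
)
print(request.validate())
```

Checking the settings up front is a small safeguard against spending a generation on an incomplete configuration.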

Comparing Access Tiers For Professional And Casual Creators

Understanding the distinction between casual use and professional application is vital when integrating AI tools into a business workflow. The platform separates its features based on the needs of the user, specifically regarding commercial rights and audio quality. For a content creator, the ability to monetize the final product is the deciding factor.

| Feature | Starter Plan | Unlimited Plan |
| --- | --- | --- |
| Commercial Rights | Standard License | Full Commercial License |
| Audio Fidelity | Standard MP3 Download | High-Definition WAV & MP3 |
| Generation Queue | Standard Speed | Priority Fast Processing |
| Advanced Tools | Basic Generation Only | Stem Extraction & Vocal Removal |
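For a quick check of which tier a project needs, the comparison can be expressed as a small lookup. This is a sketch under stated assumptions: the feature keys are hypothetical labels transcribed from the table, the function `lowest_tier_for` is invented for illustration, and Starter is assumed to be the lower tier.

```python
from typing import Dict, Iterable, Set

# Hypothetical feature labels, transcribed from the comparison table.
# Plans are listed from lower to higher tier (an assumption).
PLAN_FEATURES: Dict[str, Set[str]] = {
    "Starter": {"mp3", "standard_license"},
    "Unlimited": {
        "mp3",
        "wav",
        "full_commercial_license",
        "priority_queue",
        "stem_extraction",
        "vocal_removal",
    },
}


def lowest_tier_for(required: Iterable[str]) -> str:
    """Return the first plan, in tier order, that covers every required feature."""
    needed = set(required)
    for plan, features in PLAN_FEATURES.items():
        if needed <= features:
            return plan
    raise ValueError(f"no plan covers: {sorted(needed)}")


print(lowest_tier_for({"mp3"}))
print(lowest_tier_for({"wav", "stem_extraction"}))
```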

Securing Long Term Value Through Commercial Licensing

The legal landscape of AI-generated content is evolving, and platform-specific licensing is the safety net for creators. The “Commercial License” included in the higher-tier plans grants the user the right to use the audio in monetized content on platforms like YouTube, TikTok, and Spotify. This reduces the risk of the copyright strikes that often accompany manually selected music. By adhering to the platform’s terms, creators obtain licensed use of a unique track, which helps protect their revenue streams against the volatility of copyright enforcement algorithms.

Forecasting The Integration Of Algorithmic Composition Tools

As we look toward the future of media production, tools that convert text to audio will likely become as ubiquitous as non-linear video editors. The trend suggests a move toward even greater interactivity, where the music can adapt in real time to the visual cuts of a video. For now, the ability to generate a studio-quality song from a simple paragraph of text represents a major step forward in creative democratization. It allows storytellers to focus on their narrative, knowing that the soundtrack is no longer a barrier but a customized bridge to their audience.