The evolution of generative video has historically been defined by a struggle between creativity and control. For professionals in marketing and film, the novelty of artificial intelligence creating moving images has worn off, replaced by the frustration of wrestling with tools that hallucinate physics or ignore basic cinematic rules. The industry has been waiting for a solution that functions less like a random image generator and more like a virtual production studio. This is the precise void that Seedance 2.0 aims to fill. By coupling a sophisticated Diffusion Transformer architecture with the interpretative power of the Qwen2.5 language model, this technology offers a level of directive precision that separates professional assets from experimental chaos.

Elevating Directorial Control Through Advanced Language Understanding

The primary differentiator of this model is its ability to understand the nuance of film language. Most video generators rely on simple keyword association, often resulting in visuals that are thematically correct but compositionally broken. In my observation of the Seedance 2.0 system, the integration of a dedicated Large Language Model (LLM) fundamentally changes this dynamic. It allows the system to parse complex, multi-clause instructions that dictate camera behavior, lighting interaction, and subject blocking.

Translating Technical Script Directions Into Accurate Camera Movements

When a user requests a “slow dolly zoom focusing on the subject while the background compresses,” the model understands the optical physics implied by that statement. It does not merely move the image; it simulates the lens distortion and parallax shifts associated with that specific camera move. This capability allows creators to plan sequences with intention, rather than adapting their story to whatever random camera movement the AI decides to generate.

Synchronizing Environmental Audio With Visual Physics Simulation

Perhaps the most distinctive feature of this tool is its native audio synthesis engine. Unlike competitors that treat sound as an afterthought or a separate post-production step, this architecture generates audio waveforms in tandem with video frames. If the prompt describes a “stormy ocean crashing against rocks,” the model calculates the timing of the wave impact and generates the corresponding crash sound at the exact frame of contact. This multimodal synchronization creates a cohesive sensory experience that usually requires expensive foley work.
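The frame-level alignment described above comes down to simple arithmetic between video frames and audio samples. The sketch below is illustrative only: the 24 fps frame rate, the 48 kHz sample rate, and the `frame_to_sample` helper are assumptions chosen for the example, not documented Seedance 2.0 internals.

```python
def frame_to_sample(frame_index: int, fps: int = 24, sample_rate: int = 48_000) -> int:
    """Return the audio sample index that lines up with the start of a video frame.

    Hypothetical helper: fps and sample_rate are assumed values, not
    documented Seedance 2.0 settings.
    """
    if frame_index < 0 or fps <= 0 or sample_rate <= 0:
        raise ValueError("frame_index must be >= 0; fps and sample_rate must be > 0")
    # Integer arithmetic avoids floating-point drift on long clips.
    return frame_index * sample_rate // fps


# A wave that hits the rocks on frame 36 of a 24 fps clip (1.5 seconds in)
# should trigger its crash sound at this sample of a 48 kHz track.
impact_sample = frame_to_sample(36)
print(impact_sample)  # 72000
```

Any audio event placed at `impact_sample` starts on the same frame as the visual contact, which is the property that manual foley work would otherwise have to achieve by hand.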

Implementing A Streamlined Workflow For High Fidelity Production

Despite the complexity of the underlying neural networks, the user interface is designed to strip away technical barriers. The operational flow mimics the logical progression of a traditional production pipeline (pre-production, technical setup, shooting, and delivery), condensed into four actionable steps.

Defining Narrative Parameters With Detailed Text Prompts

The process initiates with the “Describe Vision” phase. Here, specificity is the currency of quality. Users are encouraged to provide granular details about the scene, including texture descriptions, atmospheric conditions, and specific character actions. The system’s ability to process “Image-to-Video” inputs also allows for the use of concept art or storyboards as strict visual guidelines, ensuring the final output matches the initial creative direction.
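One way to keep prompts consistently specific is to fill in the same set of fields for every shot and join them into a single description. The sketch below is a hypothetical helper, not part of any Seedance 2.0 interface: the `ShotDescription` class and its field names are assumptions that simply mirror the details listed above.

```python
from dataclasses import dataclass


@dataclass
class ShotDescription:
    """Hypothetical container for the details a prompt should carry."""
    subject_action: str
    textures: str = ""
    atmosphere: str = ""
    camera: str = ""

    def to_prompt(self) -> str:
        if not self.subject_action.strip():
            raise ValueError("subject_action is required")
        # Keep only the fields that were filled in, in a fixed order.
        parts = [self.subject_action, self.textures, self.atmosphere, self.camera]
        cleaned = [p.strip().rstrip(".") for p in parts if p.strip()]
        return ". ".join(cleaned) + "."


shot = ShotDescription(
    subject_action="A fisherman hauls a net onto a wooden pier",
    textures="Wet rope and weathered planks",
    atmosphere="Overcast dawn with light fog",
    camera="Slow dolly in at eye level",
)
print(shot.to_prompt())
```

The resulting text can be pasted into the “Describe Vision” field as-is; the fixed field order is only a convention for making sure no category of detail is forgotten.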

Configuring Output Specifications For Cross Platform Distribution

The second stage is “Configure Parameters.” This is where the technical constraints are applied. Users select the resolution, with options scaling up to 1080p for professional clarity. Aspect ratios are customizable to fit specific distribution channels, such as 16:9 for cinematic viewing or 9:16 for mobile interfaces. While the base generation handles clips between 5 and 12 seconds, the system is engineered to support narrative extensions, allowing for the creation of sequences up to 60 seconds in length.
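The constraints in this stage can be written down as a small validation sketch. The limits (5 to 12 second base clips, sequences up to 60 seconds, 16:9 and 9:16 ratios) come from the description above; the `OutputConfig` class, its field names, the reading of “1080p” as a 1080-pixel short side, and the idea that longer sequences are built by chaining base clips are all assumptions made for illustration.

```python
from __future__ import annotations

import math
from dataclasses import dataclass

# Limits taken from the text; everything else here is a hypothetical sketch.
MIN_CLIP_SECONDS = 5
MAX_CLIP_SECONDS = 12
MAX_SEQUENCE_SECONDS = 60
ASPECT_RATIOS = {"16:9": (16, 9), "9:16": (9, 16)}


@dataclass(frozen=True)
class OutputConfig:
    aspect_ratio: str = "16:9"
    short_side: int = 1080  # "1080p" read as a 1080-pixel short side (assumption)
    clip_seconds: int = 12

    def __post_init__(self) -> None:
        if self.aspect_ratio not in ASPECT_RATIOS:
            raise ValueError(f"unsupported aspect ratio: {self.aspect_ratio}")
        if not MIN_CLIP_SECONDS <= self.clip_seconds <= MAX_CLIP_SECONDS:
            raise ValueError("base clips must be 5 to 12 seconds long")

    def frame_size(self) -> tuple[int, int]:
        """Return (width, height) in pixels."""
        w, h = ASPECT_RATIOS[self.aspect_ratio]
        long_side = self.short_side * max(w, h) // min(w, h)
        return (long_side, self.short_side) if w > h else (self.short_side, long_side)

    def clips_needed(self, target_seconds: int) -> int:
        """Count base clips needed to cover target_seconds (assumes simple chaining)."""
        if not 0 < target_seconds <= MAX_SEQUENCE_SECONDS:
            raise ValueError("target must be between 1 and 60 seconds")
        return math.ceil(target_seconds / self.clip_seconds)


vertical = OutputConfig(aspect_ratio="9:16", clip_seconds=10)
print(vertical.frame_size())      # (1080, 1920)
print(vertical.clips_needed(60))  # 6
```

Under these assumptions, a full 60-second vertical sequence built from 10-second clips needs six generations, which is a useful number to have in mind when budgeting render time.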

Processing Visuals And Audio Through Multimodal Synthesis

The third step is “AI Processing.” During this phase, the variational autoencoder (VAE) and the Diffusion Transformer work in concert to render the scene. The model prioritizes temporal consistency, calculating how light and shadow should behave over time to prevent the “flickering” artifacts common in lesser models. Simultaneously, the audio engine synthesizes the soundscape, ensuring that every visual event has a corresponding auditory cue.

Exporting Watermark Free Assets For Immediate Integration

The final stage is “Export & Share.” The resulting video is delivered as a high-definition MP4 file. Critically, these files are free of watermarks, allowing them to be immediately dropped into a Non-Linear Editing (NLE) timeline for color grading or final assembly. This friction-free handoff is essential for professional workflows where speed and flexibility are paramount.

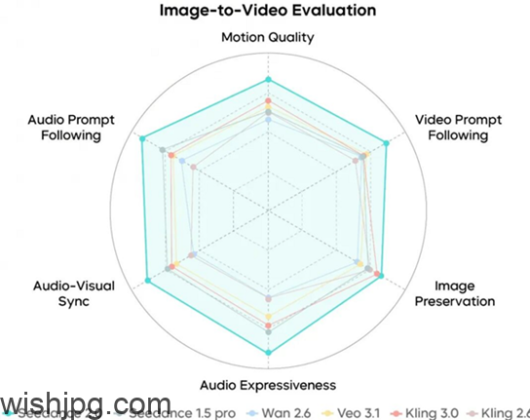

Comparing The Precision Of Seedance 2.0 Against Market Alternatives

To understand the unique value proposition of this technology, it is helpful to contrast its feature set with the general capabilities of the current generative video market. The table below illustrates the shift from random generation to controlled synthesis.

| Feature Domain | Standard Generative Models | Seedance 2.0 Specification |
| --- | --- | --- |
| Prompt Interpretation | Keyword-based; often ignores complex syntax. | Qwen2.5 LLM integration for complex direction. |
| Audio Capability | Silent output; requires external generation. | Native, frame-synchronized audio synthesis. |
| Camera Logic | Random movement; poor understanding of optics. | Simulates real-world lens physics and motion. |
| Subject Stability | High identity drift between frames. | Consistent character identity across shots. |
| Output Duration | Typically limited to 2-4 second loops. | Extensible up to 60 seconds of narrative. |

Assessing The Impact Of Consistent Physics On Viewer Immersion

The comparison above highlights a crucial gap in the market that this tool addresses: reliability. For a brand telling a story, a character’s face cannot morph halfway through a sentence, and a car cannot slide sideways without wheel rotation. By enforcing stricter adherence to physical consistency and identity retention, the model moves AI video from “surreal art” to “commercial utility.”

Navigating The Practical Limitations Of The Current Technology

The advancements are significant, but it is vital to maintain a grounded perspective on the tool’s current capabilities. In my testing, the “Director Mode” control proved powerful but not infallible. Complex interactions between multiple dynamic objects can still result in clipping or unnatural blending. Furthermore, generating 1080p content with synchronized audio is computationally intensive, so “instant” results are relative: high-quality rendering takes time.

Additionally, while the audio synthesis is impressive for environmental sounds and effects, the lip-syncing capability for dialogue is functional but may lack the micro-expressions found in dedicated facial capture systems. Users should view this as a powerful tool for establishing shots, B-roll, and visual storytelling, rather than a complete replacement for character-driven dialogue scenes in feature films.

Forecasting The Future Of AI In Professional Video Workflows

The trajectory of tools like this suggests a future where the role of the creator shifts from “operator” to “curator.” As the model handles the heavy lifting of physics simulation, lighting calculation, and audio synchronization, the human creator is free to focus purely on narrative structure and emotional impact. By bridging the gap between text instructions and broadcast-quality output, this technology is not just changing how videos are made; it is redefining who has the power to make them.